There are numerous text-to-image generation models on the market right now, but Stable Diffusion by Stability AI stands out for several reasons.

Unlike its competitors, Stable Diffusion is open source: its code and model weights are publicly available, and you are free to use the images you generate, including commercially. Stability AI offers for free what companies like OpenAI and Midjourney typically charge for. Stable Diffusion also offers more customization options when generating images than its competitor DALL-E 2 (by OpenAI). Lastly, Stable Diffusion boasts a lower carbon footprint than its competitors.

Stability AI aims to “democratize” AI, that is, to make AI accessible to everyone rather than only to the few who can afford to pay for the technology.

What is Stable Diffusion?

Stable Diffusion is a text-to-image model developed by Stability AI. This deep learning model can produce hyper-realistic images in response to almost any prompt. It is built on a technique called “latent diffusion”: instead of working directly on full-resolution pixels, it runs the diffusion process on a compressed representation of the image (the latent space), which makes it very efficient. Stable Diffusion is said to be 30 times more efficient than its competitor, DALL-E 2.
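To get a feel for why latent diffusion is efficient, compare how many numbers the model has to process in pixel space versus in the compressed latent space. The sketch below assumes the autoencoder configuration commonly described for Stable Diffusion (each image side shrunk by a factor of 8, with 4 latent channels); `tensor_size` and `latent_shape` are illustrative helpers, not part of any Stable Diffusion API.

```python
def tensor_size(height: int, width: int, channels: int) -> int:
    """Number of values in an image or latent tensor of the given shape."""
    return height * width * channels


def latent_shape(height: int, width: int, downsample: int = 8, latent_channels: int = 4):
    """Shape of the latent an autoencoder would produce for an image of this size.

    The defaults (factor 8, 4 channels) are an assumption matching the commonly
    cited Stable Diffusion autoencoder configuration.
    """
    if height % downsample or width % downsample:
        raise ValueError("image sides must be divisible by the downsampling factor")
    return height // downsample, width // downsample, latent_channels


pixels = tensor_size(512, 512, 3)               # 512x512 RGB image
latent = tensor_size(*latent_shape(512, 512))   # 64x64x4 latent
print(f"pixel space:  {pixels:,} values")       # pixel space:  786,432 values
print(f"latent space: {latent:,} values")       # latent space: 16,384 values
print(f"reduction:    {pixels // latent}x fewer values")  # reduction:    48x fewer values
```

Fewer values does not translate one-to-one into less compute, but it shows why each denoising step on the latent is much cheaper than one on full-resolution pixels.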

You can run Stable Diffusion locally on your own computer using its open-source code, which is available on GitHub. It is relatively lightweight: it runs on a single consumer-grade GPU rather than requiring specialized data-center hardware.

How does Stable Diffusion work?

Generally, text-to-image models are neural networks trained on large datasets of images paired with text descriptions. The model processes the input text and produces an image consistent with the description, combining visual patterns learned from the training data with new compositions of its own.

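Stable Diffusion generates images through a process called “diffusion.” It starts from pure random noise and removes that noise step by step, with each step guided by the text prompt, until a clean image matching the description emerges. In Stable Diffusion these steps run on the compressed latent representation, and a decoder then turns the final latent into a full-resolution image.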
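The loop below is a deliberately simplified toy of that step-by-step idea, not Stable Diffusion's actual sampler. The hypothetical `predict_noise` function cheats by already knowing the target vector (standing in for “the image the prompt describes”), whereas Stable Diffusion uses a trained neural network that estimates the noise from the noisy latent, the current step, and the text prompt. Real samplers also follow a noise schedule rather than removing a fixed share of the noise per step.

```python
import random


def predict_noise(x, target):
    """Toy stand-in for the trained network (hypothetical).

    A real model estimates the noise in its input from the noisy latent, the
    timestep and the text embedding. Here we cheat: the "prompt" is a known
    target vector, so the noise is simply whatever separates x from it.
    """
    return [xi - ti for xi, ti in zip(x, target)]


def distance(a, b):
    """Euclidean distance between two vectors."""
    return sum((ai - bi) ** 2 for ai, bi in zip(a, b)) ** 0.5


def toy_reverse_diffusion(target, steps=10, seed=0):
    """Start from pure noise and remove part of the remaining noise at each step."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in target]   # step 0: pure Gaussian noise
    history = [distance(x, target)]
    for t in range(steps, 0, -1):               # t = steps, ..., 1
        eps_hat = predict_noise(x, target)
        # Remove 1/t of the estimated noise; at t = 1 the last of it is removed.
        x = [xi - e / t for xi, e in zip(x, eps_hat)]
        history.append(distance(x, target))
    return x, history


target = [0.2, -0.5, 0.9, 0.0]   # hypothetical "image the prompt describes"
final, history = toy_reverse_diffusion(target)
for step, d in enumerate(history):
    print(f"step {step:2d}: distance to target = {d:.3f}")
```

The printed distances shrink at every step until the noise is gone, which is the core intuition behind diffusion-based generation.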

What can Stable Diffusion do?

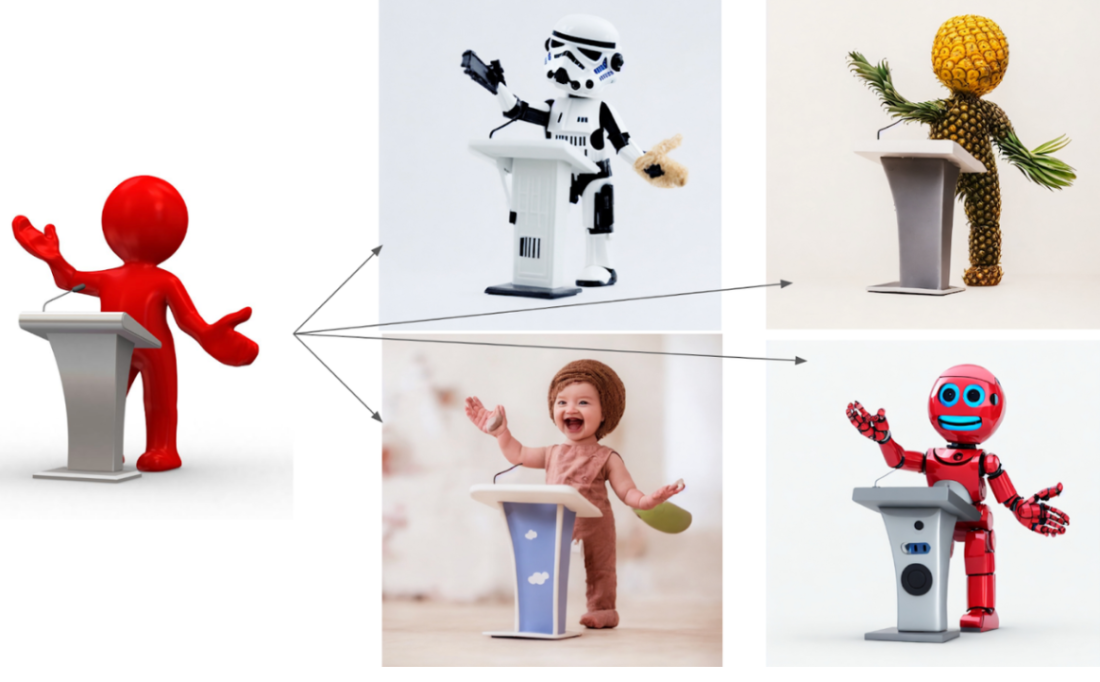

Stable Diffusion can create images from almost any prompt within seconds. The prompts don't have to make sense; they can even describe something imaginary like “an astronaut riding a horse on Mars,” and boom, you have an image that closely matches the prompt.

With Stable Diffusion, you can even create artwork in the style of well-known painters like Vincent van Gogh or Leonardo da Vinci. Once you learn how to write effective prompts and how Stable Diffusion interprets text, you can create extremely realistic images as well as oil paintings or sketches.
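Prompts are plain text, so there is no fixed syntax to learn. One common convention is to state the subject first and then add comma-separated style cues such as the medium or an artist's name. The hypothetical `build_prompt` helper below only illustrates that convention; it is not part of Stable Diffusion, and the model does not require this format.

```python
def build_prompt(subject, medium=None, artist=None, extras=()):
    """Compose a comma-separated prompt from a subject plus optional style cues.

    Hypothetical convenience helper; Stable Diffusion simply receives the
    resulting string as its text prompt.
    """
    parts = [subject.strip()]
    if medium:
        parts.append(medium.strip())
    if artist:
        parts.append(f"in the style of {artist.strip()}")
    # Skip empty extras so the prompt never contains ", ,".
    parts.extend(e.strip() for e in extras if e.strip())
    return ", ".join(parts)


print(build_prompt(
    "a starry night over a small harbor town",
    medium="oil painting",
    artist="Vincent van Gogh",
    extras=("highly detailed",),
))
# a starry night over a small harbor town, oil painting, in the style of Vincent van Gogh, highly detailed
```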

You can also give Stable Diffusion an image along with a prompt, and it will generate results based on both the input image and the prompt. With some practice writing prompts, you can transform images however you like.
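Image-to-image generation typically reuses the text-to-image denoising loop: the input image is first partially noised, and only the later, less noisy part of the schedule is run, so the result keeps the overall layout of the original. The sketch below shows that bookkeeping in simplified form. It is modeled on the `strength` setting found in common image-to-image implementations such as Hugging Face diffusers, but the function name, the evenly spaced schedule, and the 1,000 training timesteps are assumptions for illustration.

```python
def img2img_timesteps(num_inference_steps, strength, train_timesteps=1000):
    """Return the denoising timesteps an image-to-image run executes (simplified).

    Assumptions: an evenly spaced schedule over `train_timesteps` training steps,
    ordered from noisiest to cleanest. strength=1.0 runs the whole schedule;
    strength=0.0 runs no steps, leaving the input image unchanged.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    if not 1 <= num_inference_steps <= train_timesteps:
        raise ValueError("num_inference_steps must be in [1, train_timesteps]")
    stride = train_timesteps // num_inference_steps
    # e.g. 10 steps over 1000 timesteps -> [900, 800, ..., 100, 0]
    full_schedule = [(num_inference_steps - 1 - i) * stride for i in range(num_inference_steps)]
    # The input image is noised to the level of the first step we keep,
    # so only the last `steps_to_run` steps of the schedule are executed.
    steps_to_run = min(int(num_inference_steps * strength), num_inference_steps)
    return full_schedule[num_inference_steps - steps_to_run:]


for s in (1.0, 0.5, 0.0):
    print(s, img2img_timesteps(10, s))
# 1.0 [900, 800, 700, 600, 500, 400, 300, 200, 100, 0]
# 0.5 [400, 300, 200, 100, 0]
# 0.0 []
```

With `strength=1.0` every step runs and the input image is essentially replaced by noise; lower values run fewer steps and stay closer to the original image.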

What is new with the latest version of Stable Diffusion?

The most recent version at the time of writing, Stable Diffusion 2.0, was released by Stability AI on November 24, 2022. It brings the following improvements:

- Stable Diffusion 2.0 can produce images at default resolutions of both 512×512 and 768×768 pixels, whereas earlier versions defaulted to 512×512.

- Stable Diffusion 2.0 also includes an Upscaler Diffusion model that increases the resolution of images by a factor of 4 per side (for example, from 512×512 to 2048×2048 pixels).

- Depth-to-image diffusion model: it infers the depth of an image you provide and uses that depth information, together with your text prompt, to transform the image into your desired outcome while preserving its overall structure.

- Updated Inpainting Diffusion Model: you can modify selected parts of any picture, for example adding a mustache to a face or putting a Christmas hat on your dog's head.
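Inpainting boils down to changing only a masked region of an image. The sketch below shows just the final compositing idea on tiny grayscale grids: pixels where the mask is 1 come from the generated image, and pixels where it is 0 are kept from the original. This is a simplification: Stable Diffusion 2.0's dedicated inpainting model is described as also feeding the mask and the masked image into the network itself, so new content blends with its surroundings. `apply_inpainting_mask` is a hypothetical helper.

```python
def apply_inpainting_mask(original, generated, mask):
    """Combine two equally sized grayscale images (lists of rows) using a mask.

    mask 1.0 -> take the generated pixel (the region to repaint, e.g. where the
    hat should go); mask 0.0 -> keep the original pixel; values in between blend.
    """
    if not (len(original) == len(generated) == len(mask)):
        raise ValueError("images and mask must have the same number of rows")
    result = []
    for row_o, row_g, row_m in zip(original, generated, mask):
        if not (len(row_o) == len(row_g) == len(row_m)):
            raise ValueError("images and mask must have the same shape")
        result.append([m * g + (1.0 - m) * o for o, g, m in zip(row_o, row_g, row_m)])
    return result


original = [[0.1, 0.1, 0.1],
            [0.1, 0.1, 0.1]]
generated = [[0.9, 0.9, 0.9],
             [0.9, 0.9, 0.9]]
mask = [[0.0, 1.0, 0.0],
        [0.0, 1.0, 0.0]]   # repaint only the middle column
print(apply_inpainting_mask(original, generated, mask))
# [[0.1, 0.9, 0.1], [0.1, 0.9, 0.1]]
```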

What are common uses for Stable Diffusion?

Stable Diffusion has a number of potential uses, including:

- Improving the efficiency and accuracy of image generation tasks, such as creating product mockups or visualizations for product research

- Providing new creative opportunities for artists and designers, who can generate unique images quickly and then refine and tailor them

- Facilitating the creation of personalized images for marketing and advertising purposes

- Democratizing the creative process for individuals who do not have the fine motor skills required for art or graphic design

These uses apply across many fields, including:

- Advertising: Stable Diffusion can generate visuals for ads and pitches.

- Architecture: Stable Diffusion can generate abstract architectural concepts that can be developed into full designs.

- Fashion: Stable Diffusion can generate fresh design ideas for the fashion industry.

- Film: Screenwriters can present their ideas to set designers in pictorial form, which makes communicating a vision much easier.

- Logo design: Stable Diffusion can create attractive, professional-looking logos.

Conclusion

In conclusion, Stable Diffusion has shown promise in creating realistic images from text descriptions. This technology has the potential to improve the efficiency and accuracy of image generation tasks and to provide new creative opportunities for artists, designers, and everyday users. However, there are also concerns about its potential misuse, including the creation of fake or misleading images. As AI progresses, it will be critical to address these concerns and ensure the responsible and ethical development and use of text-to-image AI.