When someone mentions the possibility of machines replacing humans, the first thing that usually comes to mind is robots taking over low-skill, heavy-lifting jobs with greater efficiency and accuracy.

The most straightforward example of this is factory workers who have been replaced by robots that can work 24/7 without needing a break, food, or pay, and that make far fewer mistakes than people do.

While this kind of automation helps corporations maximize profits, it has put many human workers out of work. With the introduction of DALL-E 2, many have started wondering whether the same fate awaits artists. Will DALL-E make or break human artists?

Don’t worry, this article isn’t going to paint the future as a dystopian society, teeming with robot sentinels and human beings living in fear!

While many see AI as a threat to humanity, its creators think otherwise: they see the technology as a tool that will help humankind achieve even greater things. Released by OpenAI in 2022, DALL-E 2 is one amazing example of the futuristic tools being made today.

How does DALL-E 2 work?

DALL-E 2 produces high-quality images from a text prompt, with no further human intervention.

DALL-E 2 has two main parts: CLIP and a diffusion model.

CLIP (Contrastive Language-Image Pre-training) is the main brain behind the tool. It is a machine-learning model trained on hundreds of millions of images and their text descriptions found on the internet, and it learns to link each image with the words that describe it.

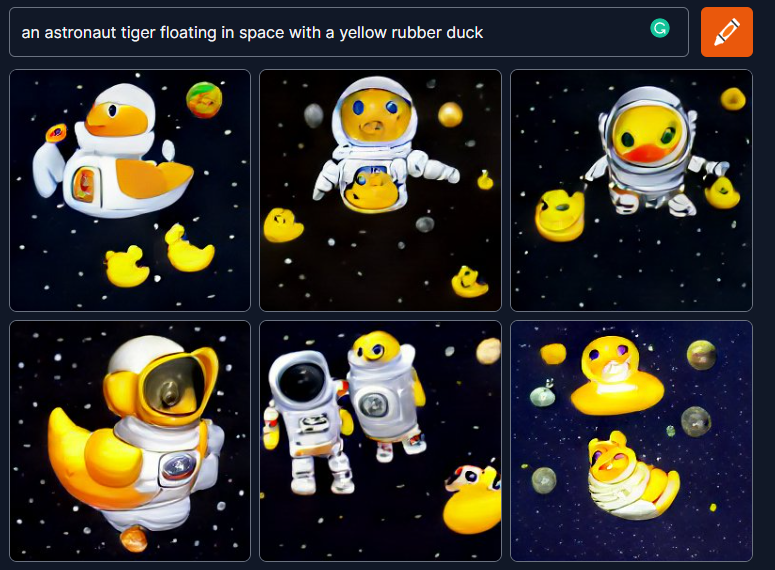

The training data consists of images collected by OpenAI and paired with their descriptions. The model’s many learned parameters let it work out what an image is likely to convey to a human viewer. With this learned link between words and pictures, DALL-E 2 can work out a general outline of what a person expects when they type, say, “an astronaut tiger floating in space with a yellow rubber duck”.
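To make the idea of matching pictures and words a little more concrete, here is a toy Python sketch of how a CLIP-style model might score captions against an image. It assumes both the image and the captions have already been turned into vectors (embeddings) in a shared space; the three-number vectors below are invented purely for illustration, whereas real CLIP embeddings have hundreds of dimensions and come from trained neural networks.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means they point the same way."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical 3-dimensional "embeddings", made up for illustration only.
# Think of the axes loosely as "tiger-ness", "space-ness" and "duck-ness".
image_embedding = [0.9, 0.8, 0.1]  # a picture of a tiger floating in space

captions = {
    "a tiger astronaut in space": [0.85, 0.9, 0.05],
    "a yellow rubber duck in a bathtub": [0.05, 0.1, 0.95],
    "a tiger sleeping in the jungle": [0.95, 0.05, 0.0],
}

def best_caption(image_vec, caption_vecs):
    """Return the caption whose embedding is most similar to the image's."""
    return max(caption_vecs, key=lambda c: cosine_similarity(image_vec, caption_vecs[c]))

for caption, vec in captions.items():
    print(f"{cosine_similarity(image_embedding, vec):.3f}  {caption}")
print("Best match:", best_caption(image_embedding, captions))
```

The caption whose vector points in nearly the same direction as the image’s wins. Roughly speaking, CLIP is trained to make this similarity high for matching image-caption pairs and low for mismatched ones.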

Next, the diffusion model starts from a canvas of random noise and, step by step, removes that noise, guided by CLIP’s representation of the prompt, until it arrives at a few images it judges to best match the description.
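The “start from noise, then gradually clean it up” loop can be illustrated with a deliberately simplified Python sketch. This is not how DALL-E 2 is implemented: the hypothetical `toy_denoise` function below cheats by knowing the target image in advance, whereas a real diffusion model uses a trained neural network, guided by the prompt, to estimate which noise to remove at each step.

```python
import random

def toy_denoise(target, steps=50, removal_rate=0.1, seed=0):
    """Toy illustration of iterative denoising (NOT a real diffusion model).

    Starts from pure random noise and, over many small steps, removes a
    fraction of the estimated noise. The "noise estimate" here is an oracle
    that compares against a known target; a real diffusion model would get
    this estimate from a trained neural network conditioned on the prompt.
    """
    rng = random.Random(seed)
    # Step 0: a "canvas" of random values, one per pixel.
    image = [rng.gauss(0.0, 1.0) for _ in target]
    for _ in range(steps):
        # Hypothetical oracle: the noise is whatever separates us from the target.
        estimated_noise = [x - t for x, t in zip(image, target)]
        # Remove only a small fraction each step, loosely like a diffusion
        # sampler's many small refinements.
        image = [x - removal_rate * n for x, n in zip(image, estimated_noise)]
    return image

# A hypothetical 1-D "image" of five pixel brightness values.
target = [0.0, 0.25, 0.5, 0.75, 1.0]
result = toy_denoise(target)
print([round(v, 3) for v in result])
```

After 50 steps, each pixel has shed all but about 0.5% of its original noise, so the printed values sit very close to the target.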

The results can be photorealistic enough to make our jaws drop. And while this may sound like a random process of collaging image snippets together, the more exciting part is that it can replicate the art styles of particular artists.

What can DALL-E 2 do?

At this point, DALL-E 2 can create almost anything the user can dream up.

Want to see what the modern-day New York City skyline would look like if Vincent van Gogh (who died in 1890) had painted it? No problem: done! The results are pretty incredible, if I do say so myself (as a huge Van Gogh fan who has even visited the Van Gogh Museum in Amsterdam).

This is because the technology doesn’t just cut out parts of Van Gogh’s paintings and stitch them together. Instead, it first works out what a modern city looks like and then draws on the patterns it learned from Van Gogh’s work to create an entirely new piece, much as a human artist would first picture a scene in their mind and then put it on canvas.

This technology can even blend art styles to create art one could previously only imagine. This opens up a whole world of possibilities, and it is also where the threat to human artists lies, because human artists face limitations. Two big ones are time and style: we are all bound by time, and most artists are proficient in only a couple of styles, not all of them.

DALL-E 2 can also edit and retouch photos with nothing but a simple text prompt, using a technique called “in-painting”. The user selects a region of the image, and the model generates new content for that region that blends convincingly into the rest of the picture. This can help those in the photo-editing business by drastically cutting down the time spent on tedious retouching.
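The key property of in-painting, that only the selected region changes while the rest of the picture is left alone, can be sketched on a tiny grid of pixel values. The `inpaint` function and the neighbour-averaging fill below are hypothetical stand-ins written for illustration; DALL-E 2 fills the selected region with a generative model guided by the text prompt, not by simple averaging.

```python
def inpaint(image, mask, fill_fn):
    """Replace only the masked pixels; everything outside the mask is kept as-is.

    `fill_fn` stands in for the generative model: it receives the original
    image and the (row, col) of a masked pixel and returns a new value for it.
    """
    return [
        [fill_fn(image, r, c) if mask[r][c] else image[r][c] for c in range(len(row))]
        for r, row in enumerate(image)
    ]

def average_of_unmasked_neighbours(mask):
    """Hypothetical stand-in for the model: average the surrounding unmasked pixels."""
    def fill(image, r, c):
        values = [
            image[rr][cc]
            for rr in range(r - 1, r + 2)
            for cc in range(c - 1, c + 2)
            if 0 <= rr < len(image) and 0 <= cc < len(image[0]) and not mask[rr][cc]
        ]
        return sum(values) / len(values) if values else 0.0
    return fill

photo = [
    [10, 10, 10],
    [10, 99, 10],  # 99 is a "blemish" we want removed
    [10, 10, 10],
]
selection = [
    [False, False, False],
    [False, True, False],
    [False, False, False],
]
retouched = inpaint(photo, selection, average_of_unmasked_neighbours(selection))
print(retouched)
```

Keeping the mask separate from the fill step mirrors the user-facing workflow: the person decides *where* to edit, and the model only decides *what* goes there.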

How could it be used by companies and individuals?

The threat to artists is undoubtedly real, but I believe artists can actually leverage this tech to create even more.

Generally, I think most of us would agree that creative artists do not think the same way as the rest of us. This tech gives new skills to ordinary people and superpowers to artists.

Basically, any work that involves art or content, especially work with very specific requirements, can be quickly mocked up with the help of AI.

Take YouTube content creators, for example. Making a video is an enormous effort, and actually getting people to watch it is another huge task, one that can take more than one person. And how does a viewer find a video? By looking at the thumbnail and the description. If these two things are intriguing enough, the creator has already won, no matter what the video contains.

Successfully getting your attention is the biggest goal of any content creator, regardless of medium, and often it takes a single eye-catching image to do it. While a YouTube creator may be a great actor, singer, or comedian, they are probably not also a great graphic designer who can create thumbnail images to promote their content.

DALL-E 2 will enable them to do that easily and quickly.

Do we need DALL-E?

All of this raises the question of whether we actually need a 100% AI-based tool that generates never-before-seen images.

Perhaps we could go as far as asking, should something like this be allowed to exist?

One of the biggest controversies around AI-generated images arose in 2022, when an artist submitted an image created with another AI tool, Midjourney, to the Colorado State Fair’s fine arts competition and won first place in its digital arts category.

While the artist argued that he had credited the tool sufficiently, others pointed out that a judge would be unlikely to even consider the possibility that an entry had not been produced by a human.

It’s worth noting that tools like DALL-E 2 are not yet fully available to the public (although limited generations are possible), so as not to contaminate the early data set. At the time of writing, OpenAI has a waitlist for people who want to work with DALL-E 2.

This is important as the model is still in its early learning stages, and potential harm could be done using malicious data/prompts.

What are the risks associated with DALL-E 2?

Closely following the risk of corrupting DALL-E 2 is the threat of its misuse. Misinformation is the foremost risk: creating fake news, complete with images that appear to support its claims, could be just a click away.

DALL-E 2 has the potential to spark significant controversies and give forensic teams a hard time, since its output is becoming more realistic day by day.

The other great risk is to artists, whose careers may be affected. But it is now up to them whether to reject this tool or use it to their advantage to create unique art pieces. They can also use it to explore their creativity in a fraction of the time, maximizing their output.

What is next for DALL-E 2?

DALL-E 2 is one of the numerous research projects currently under way at OpenAI, which was co-founded in 2015 by a group that included Sam Altman.

It is just one early step toward the organization’s primary objective: “to ensure that artificial general intelligence (AGI)—by which we mean highly autonomous systems that outperform humans at most economically valuable work—benefits all of humanity.”

This is a very noble goal, especially now that the race for AI has started and the welfare of humanity is not always the priority of other AI-related projects.

This is one of the reasons why DALL-E 2 is not yet readily available to the public. OpenAI has plans for future iterations of DALL-E that will focus on greater accuracy in less time.